Category: Methods

-

Do online consultations make citizens more satisfied with local democracy?

We find that giving citizens an opportunity to have a say in political decisions influences…

-

How easy is it to research the Chinese web?

The research expectations seem to be that control and intervention by Beijing will be most…

-

Can text mining help handle the data deluge in public policy analysis?

There has been a major shift in the policies of governments concerning participatory governance—that is,…

-

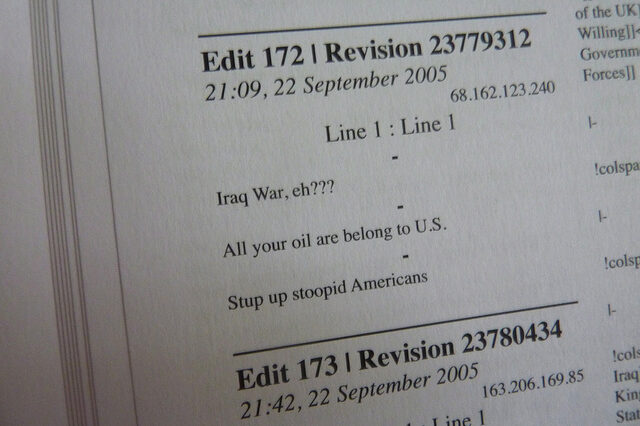

Harnessing ‘generative friction’: can conflict actually improve quality in open systems?

The more that differing points of view and differing evaluative frames came into contact, the…