Tag: open data

All topics

-

Open government policies are spreading across Europe—but what are the expected benefits?

Mapping out the different meanings of open government, and how it is framed by different…

-

Using Open Government Data to predict sense of local community

Advocates hope that opening government data will increase government transparency, catalyse economic growth, address social…

-

Online crowd-sourcing of scientific data could document the worldwide loss of glaciers to climate change

The platform aims to create long-lasting scientific value with minimal technical entry barriers—it is valuable…

-

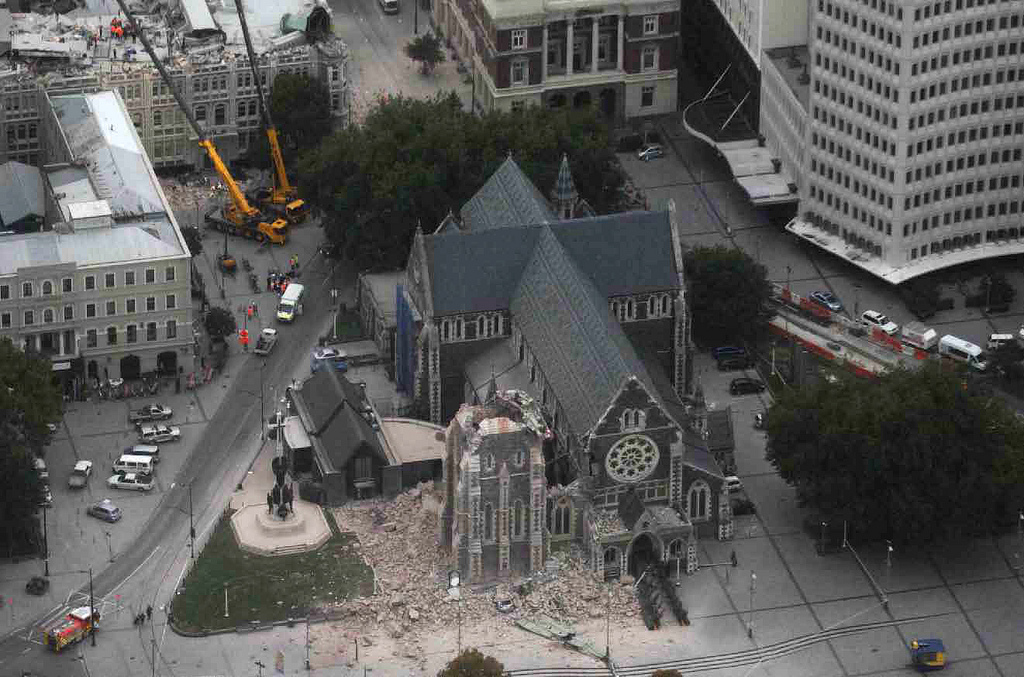

Preserving the digital record of major natural disasters: the CEISMIC Canterbury Earthquakes Digital Archive project

The Internet can be hugely useful to coordinate disaster relief efforts, or to help rebuild…