Tag: collective action

-

Do Finland’s digitally crowdsourced laws show a way to resolve democracy’s “legitimacy crisis”?

Discussing the digitally crowdsourced law for same-sex marriage that was passed in Finland and analysing…

-

Finnish decision to allow same-sex marriage “shows the power of citizen initiatives”

It is the first piece of “crowdsourced” legislation on its way to becoming law in…

-

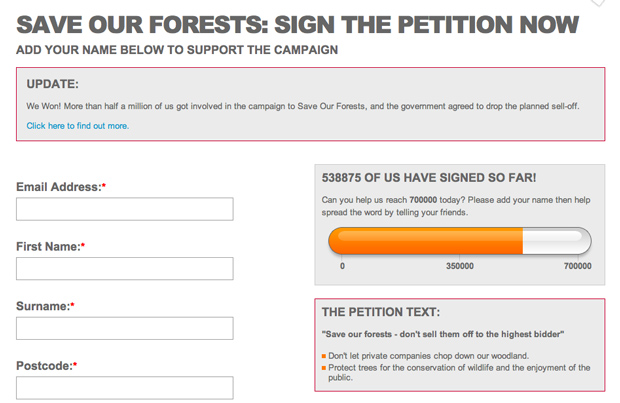

Presenting the moral imperative: effective storytelling strategies by online campaigning organisations

Existing civil-society-focused organisations are also being challenged to fundamentally change their approach, to…