Tag: censorship

-

Monitoring Internet openness and rights: report from the Citizen Lab Summer Institute 2014

Informing the global discussions on information control research and practice in the fields of censorship,…

-

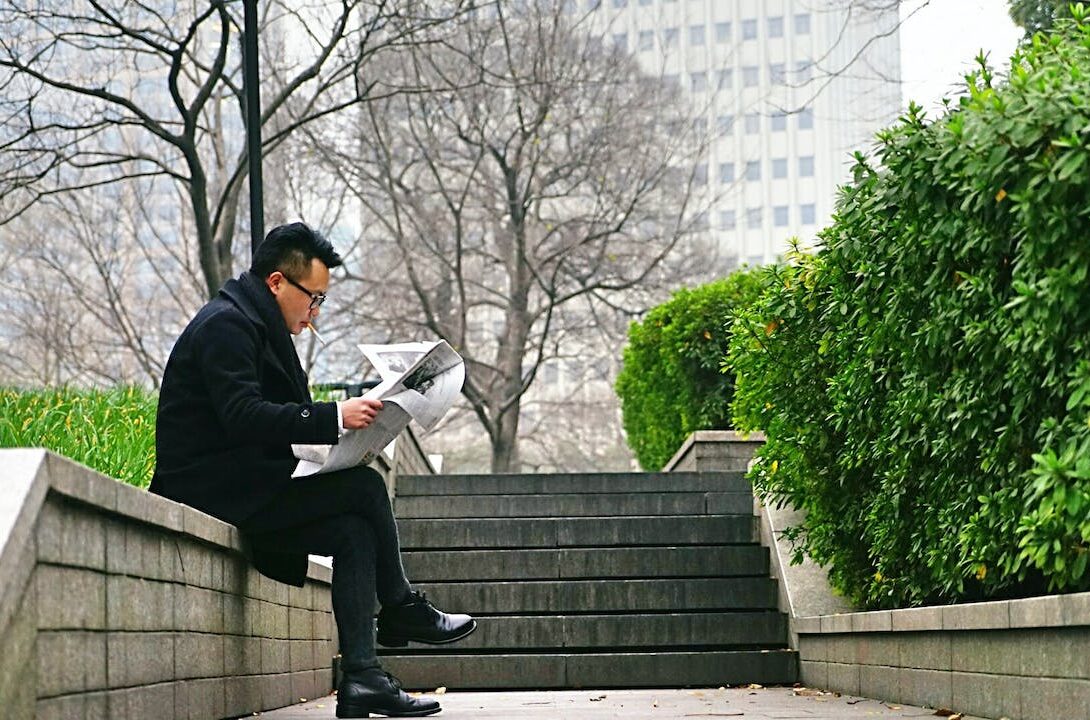

How easy is it to research the Chinese web?

The research expectations seem to be that control and intervention by Beijing will be most…

-

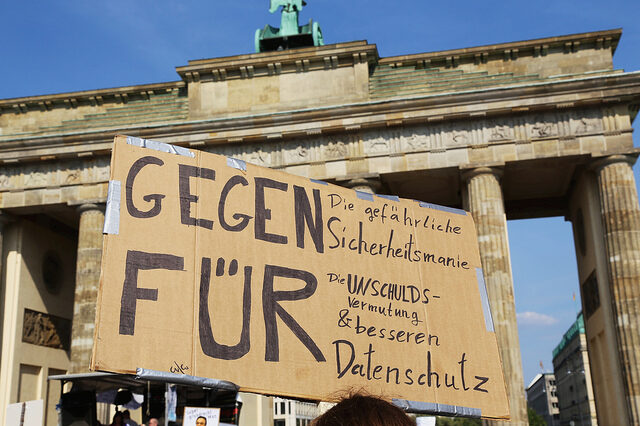

Uncovering the patterns and practice of censorship in Chinese news sites

Is censorship of domestic news more geared towards “avoiding panics and maintaining social order”, or…

-

The complicated relationship between Chinese Internet users and their government

Chinese citizens are being encouraged by the government to engage and complain online. Is the…

-

How effective is online blocking of illegal child sexual content?

Combating child pornography and child abuse is a universal and legitimate concern. With regard to…