Tag: data protection

-

New Voluntary Code: Guidance for Sharing Data Between Organisations

For data sharing between organisations to be straightforward, there needs to be a common understanding…

-

Does a market-approach to online privacy protection result in better protection for users?

Examining the voluntary provision by commercial sites of information privacy protection and control under the…

-

The Future of Europe is Science and ethical foresight should be a priority

– in Ethics

How will we keep healthy? How will we live, learn, work and interact in the…

-

Designing Internet technologies for the public good

People are very often unaware of how much data is gathered about them—let alone the…

-

Unpacking patient trust in the “who” and the “how” of Internet-based health records

Key to successful adoption of Internet-based health records is how much a patient places trust…

-

Ethical privacy guidelines for mobile connectivity measurements

Measuring the mobile Internet can expose information about an individual’s location, contact details, and communications…

-

The scramble for Africa’s data

– in Development

As Africa goes digital, the challenge for policymakers becomes moving from digitisation to managing and…

-

Time for debate about the societal impact of the Internet of Things

As the cost and size of devices fall and network access becomes ubiquitous, it is…

-

eHealth: what is needed at the policy level? New special issue from Policy and Internet

Policymakers wishing to promote greater choice and control among health system users should take account…

-

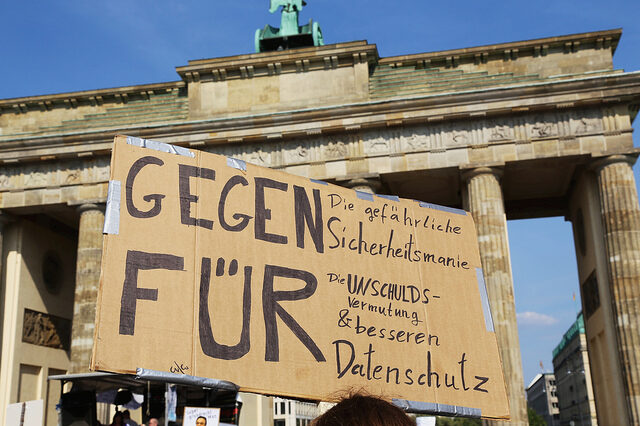

Personal data protection vs the digital economy? OII policy forum considers our digital footprints

The fact that data collection is now so routine and so extensive should make us…